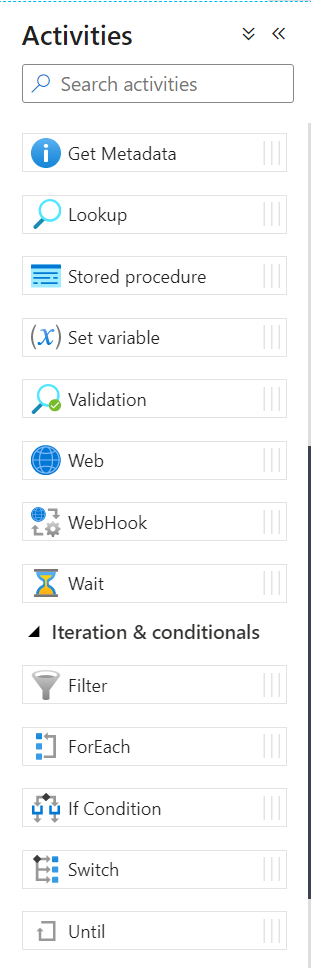

Control Flow activities in Data Factory involve orchestration of pipeline activities including chaining activities in a sequence, branching, defining parameters at the pipeline level, and passing arguments while invoking the pipeline. They also include custom-state passing and looping containers.

If you’ve been using Azure Data Factory for a while, you might have hit some limitations that don’t exist in tools like SSIS or Databricks. Knowing these limitations up front can help you design better pipelines, so I’m listing a few here of which you’ll want to be aware.

- You cannot nest For Each activities.

Within a pipeline, you cannot place a For Each activity inside another For Each activity. If you need to iterate through two datasets, you have two main options. You can combine the two datasets before you iterate over them, or you can use a parent/child pipeline design where you move the inner For Each activity into the child pipeline. Fun fact: currently the Data Factory UI won’t stop you from nesting For Each activities. You won’t find out until you try to execute the pipeline.

- You cannot put a For Each activity or Switch activity inside of an If activity.

The Data Factory UI will prevent you from doing this by removing the For Each and Switch from the activity list. You can redesign the pipeline to put the inner activity inside a child pipeline. Also note that you can put an If activity inside of a For Each activity.

- You cannot use a Set Variable activity inside a For Each activity that runs in parallel.

The Data Factory UI won’t stop you, but you’ll quickly learn that the scope of the variable is the pipeline and not the For Each or any other activity. So you’ll just overwrite the value in no particular order as the activities execute in parallel. The workaround for this is specific to your use case. You might try using an existing attribute of the item you are iterating on instead of setting a variable. Append Variable works fine, since each loop could add a value. But again, don’t count on the order being meaningful.

- You cannot nest If activities.

The Data Factory UI will prevent you from nesting the If activities. If you need to have two sets of conditions, you can either combine conditions or move the inner condition to a child pipeline.

- You cannot nest Switch activities.

Similar to the If activity, the Data Factory UI will prevent you from nesting Switch activities. And again, you can either combine conditions or move the inner condition to a child pipeline.

- You cannot put a For Each or If activity inside a Switch activity.

The Data Factory UI will prevent you from doing this. You can move the inner activity to a child pipeline if needed.

- You cannot use an expression to populate the pipeline in an Execute Pipeline activity.

It would be great to design a truly dynamic pipeline where you could have a dataset that defines which pipelines to execute, but you can’t do that natively in the Data Factory UI. The Invoked Pipeline property doesn’t allow dynamic expressions. If you need to dynamically execute pipelines, you can use Logic Apps or Azure Functions to execute the pipeline.

- You cannot dynamically populate the variable name in Set Variable and Append Variable activities.

The Data Factory UI only allows you to choose from a list of existing variables. As a workaround, you could use an If activity to determine which variable you will populate.

- The Lookup activity has a maximum of 5,000 rows and a maximum size of 4 MB.

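If you need to iterate over more than 5,000 rows, you’ll need to split your list between a child and parent pipeline. The parent pipeline iterates over batches, and each run of the child pipeline looks up one batch that stays within the Lookup limits.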
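To make the parent/child split for large lists more concrete, here is a minimal Python sketch of the batching arithmetic. It is not Data Factory code. It only shows how a parent pipeline might carve a large list into ranges that each fit under the 5,000-row Lookup limit mentioned above. The helper name `batch_ranges` and the idea of passing an offset and a row count to the child pipeline as parameters are my assumptions for illustration.

```python
LOOKUP_ROW_LIMIT = 5000  # Lookup activity row limit cited above


def batch_ranges(total_rows, batch_size=LOOKUP_ROW_LIMIT):
    """Return (offset, row_count) pairs that together cover total_rows.

    Hypothetical helper: a parent pipeline could iterate over these pairs
    and pass each one to a child pipeline as parameters.
    """
    if batch_size <= 0:
        raise ValueError("batch_size must be positive")
    return [
        (offset, min(batch_size, total_rows - offset))
        for offset in range(0, total_rows, batch_size)
    ]


# 12,000 rows need three child runs: two full batches and one partial batch.
print(batch_ranges(12000))  # [(0, 5000), (5000, 5000), (10000, 2000)]
```

Keep in mind that the 4 MB size limit still applies to each batch, so wide rows may call for a smaller batch size than 5,000.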

In addition to the items mentioned above, also note these resource limits listed in Microsoft Docs. Limits like 40 activities per pipeline (including inner activities for containers) can bite you if you aren’t careful about implementing a modular design. And if you do have a modular design with lots of pipelines calling other pipelines, be aware that you are limited to 100 queued runs per pipeline and 1,000 concurrent pipeline activity runs per subscription per Azure Integration Runtime region. I don’t hit these limits too often, but I have hit them.
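Because the activity limit includes inner activities for containers, it can help to count them from the pipeline’s JSON definition before you get close to the limit. The Python below is a rough sketch, assuming the usual pipeline JSON layout where For Each and Until hold their children under `activities`, If Condition under `ifTrueActivities` and `ifFalseActivities`, and Switch under `cases` and `defaultActivities`. The sample pipeline is hypothetical and trimmed to only the fields the counter reads, and whether the service tallies activities in exactly this way is an assumption on my part.

```python
def count_activities(activities):
    """Count activities, including those nested inside container activities."""
    total = 0
    for activity in activities:
        total += 1
        props = activity.get("typeProperties", {})
        # ForEach and Until keep their inner activities under "activities".
        total += count_activities(props.get("activities", []))
        # If Condition uses separate true and false branches.
        total += count_activities(props.get("ifTrueActivities", []))
        total += count_activities(props.get("ifFalseActivities", []))
        # Switch has a list of cases plus a default branch.
        for case in props.get("cases", []):
            total += count_activities(case.get("activities", []))
        total += count_activities(props.get("defaultActivities", []))
    return total


# Hypothetical pipeline definition, trimmed to the fields the counter reads.
pipeline = {
    "name": "ExamplePipeline",
    "properties": {
        "activities": [
            {"name": "GetList", "type": "Lookup", "typeProperties": {}},
            {
                "name": "LoopItems",
                "type": "ForEach",
                "typeProperties": {
                    "activities": [
                        {"name": "CopyItem", "type": "Copy", "typeProperties": {}},
                        {
                            "name": "CheckItem",
                            "type": "IfCondition",
                            "typeProperties": {
                                "ifTrueActivities": [
                                    {"name": "LogItem", "type": "WebActivity", "typeProperties": {}}
                                ]
                            },
                        },
                    ]
                },
            },
        ]
    },
}

print(count_activities(pipeline["properties"]["activities"]))  # 5
```

Here the two top-level activities become five once the For Each and If Condition contents are included, which is how a pipeline that looks small on the canvas can still creep toward the limit.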

This is not to say you can’t create good solutions in Azure Data Factory—you absolutely can. But Data Factory has some limitations that you might not expect if you have experience working with other data integration/orchestration tools.

Have you hit any other limits that caused you to design your pipelines differently that you would like to share with others? If so, leave me a comment.
