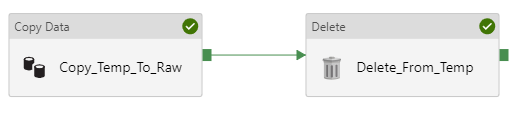

Data Factory can be a great tool for cloud and hybrid data integration. But since its inception, it has been less than straightforward to move data (that is, copy it to another location and then delete the original).

It is a common practice to load data to blob storage or data lake storage before loading it into a database, especially if your data is coming from outside of Azure. We often create a staging area in our data lakes to hold data until it has been loaded to its next destination. Then we delete the data in the staging area once the subsequent load succeeds. But before February 2019, there was no Delete activity. We had to write an Azure Function or use a Logic App called by a Web Activity in order to delete a file. I imagine every person who started working with Data Factory had to go and look this up.

But now Data Factory V2 has a Delete activity.
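The staging pattern described above (load the data, then delete it only after the load succeeds) maps onto a pipeline in which the Delete activity depends on the Copy activity with a `Succeeded` condition. Below is a minimal sketch that builds such a pipeline definition as a Python dict and prints it as JSON. The pipeline, activity, and dataset names (`MoveStagedFile`, `CopyStagedToTarget`, `StagingCsv`, `SqlTarget`) are hypothetical. The source and sink types assume a delimited-text-to-Azure-SQL load. Check all property names against the current ADF JSON reference before relying on them.

```python
import json


def build_move_pipeline(staging_dataset: str, target_dataset: str) -> dict:
    """Build a hypothetical ADF pipeline: copy staged data, then delete it.

    The Delete activity runs only if the Copy activity succeeds, so a failed
    load leaves the staged data in place for a rerun.
    """
    copy_activity = {
        "name": "CopyStagedToTarget",
        "type": "Copy",
        "inputs": [{"referenceName": staging_dataset, "type": "DatasetReference"}],
        "outputs": [{"referenceName": target_dataset, "type": "DatasetReference"}],
        "typeProperties": {
            # Assumed source/sink types for a delimited-text-to-Azure-SQL load.
            "source": {"type": "DelimitedTextSource"},
            "sink": {"type": "AzureSqlSink"},
        },
    }
    delete_activity = {
        "name": "DeleteStagedData",
        "type": "Delete",
        # Run the delete only after the copy has succeeded.
        "dependsOn": [
            {"activity": copy_activity["name"], "dependencyConditions": ["Succeeded"]}
        ],
        "typeProperties": {
            "dataset": {"referenceName": staging_dataset, "type": "DatasetReference"}
        },
    }
    return {
        "name": "MoveStagedFile",
        "properties": {"activities": [copy_activity, delete_activity]},
    }


if __name__ == "__main__":
    print(json.dumps(build_move_pipeline("StagingCsv", "SqlTarget"), indent=2))
```

With the `Succeeded` dependency, a failed copy never triggers the delete. This is the property that makes copy-plus-delete behave like a move.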

How the Delete Activity Works

The Delete activity can delete from the following data stores:

- Azure blob storage

- ADLS Gen 1

- ADLS Gen 2

- File systems

- FTP

- SFTP

- Amazon S3

You can delete files or folders. You can also specify whether to delete recursively (including all subfolders of the specified folder). If you want to delete files/folders from a file system on a private network (e.g., on premises), you will need to use a self-hosted integration runtime running version 3.14 or higher. Data Factory will need write access to your data store in order to perform the delete.

You can log the names of deleted files as part of the Delete activity. This requires you to provide an Azure Blob Storage, ADLS Gen 1, or ADLS Gen 2 account as a place to write the logs.
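In Microsoft's Delete activity documentation, logging is switched on with `enableLogging`. It is pointed at storage through `logStorageSettings`, which names a linked service and a folder path. The sketch below builds that definition in Python. The activity name, the dataset name, the linked service name `LogStorage`, and the path `deletelogs/staging` are hypothetical placeholders. Verify the property names against the current documentation.

```python
import json


def delete_activity_with_logging(name, dataset, log_linked_service, log_path):
    """Return a Delete activity definition that logs the deleted file names.

    log_linked_service should refer to a Blob Storage, ADLS Gen 1, or
    ADLS Gen 2 linked service; log_path is the folder the log is written to.
    """
    return {
        "name": name,
        "type": "Delete",
        "typeProperties": {
            "dataset": {"referenceName": dataset, "type": "DatasetReference"},
            "enableLogging": True,
            "logStorageSettings": {
                "linkedServiceName": {
                    "referenceName": log_linked_service,
                    "type": "LinkedServiceReference",
                },
                "path": log_path,
            },
        },
    }


if __name__ == "__main__":
    activity = delete_activity_with_logging(
        "DeleteStagedData", "StagingCsv", "LogStorage", "deletelogs/staging"
    )
    print(json.dumps(activity, indent=2))
```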

You can parameterize the following properties in the Delete activity itself:

- Timeout

- Retry

- Delete file recursively

- Max concurrent connections

- Enable Logging

- Logging folder path

You can also parameterize your dataset as usual.
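In the JSON behind the ADF authoring UI, a parameterized property is written as an expression object of the form `{"value": "@pipeline().parameters.Name", "type": "Expression"}`. Timeout and retry belong to the activity's `policy` block rather than to `typeProperties`. The sketch below wires each optional setting listed above to a pipeline parameter. Several parts are assumptions:

- The parameter names (`DeleteTimeout`, `DeleteRetries`, `RecursiveDelete`, `MaxConnections`, `EnableLogging`, `LogFolder`) are hypothetical and would need to be declared on the pipeline.
- The linked service `LogStorage` is likewise a hypothetical name.
- The placement of `recursive` and `maxConcurrentConnections` under `storeSettings` with an Azure Blob read-settings type is assumed from current documentation.

```python
import json


def expr(text: str) -> dict:
    """Wrap an ADF expression string so it is evaluated at run time."""
    return {"value": text, "type": "Expression"}


def parameterized_delete_activity(dataset: str) -> dict:
    """Delete activity whose optional settings come from pipeline parameters.

    All parameter names are hypothetical; the pipeline must declare them.
    """
    p = "@pipeline().parameters."
    return {
        "name": "DeleteStagedData",
        "type": "Delete",
        "policy": {
            # Timeout and retry are activity policy settings.
            "timeout": expr(p + "DeleteTimeout"),
            "retry": expr(p + "DeleteRetries"),
        },
        "typeProperties": {
            "dataset": {"referenceName": dataset, "type": "DatasetReference"},
            "storeSettings": {
                # Assumed read-settings type for an Azure Blob Storage dataset.
                "type": "AzureBlobStorageReadSettings",
                "recursive": expr(p + "RecursiveDelete"),
                "maxConcurrentConnections": expr(p + "MaxConnections"),
            },
            "enableLogging": expr(p + "EnableLogging"),
            "logStorageSettings": {
                "linkedServiceName": {
                    "referenceName": "LogStorage",
                    "type": "LinkedServiceReference",
                },
                "path": expr(p + "LogFolder"),
            },
        },
    }


if __name__ == "__main__":
    print(json.dumps(parameterized_delete_activity("StagingCsv"), indent=2))
```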

All that’s required in the Delete activity is an activity name and a dataset. The other properties are optional. Just be sure you have specified the appropriate file path. Maybe try this out in dev before you accidentally delete your way through prod.

To delete all contents of a folder (including subfolders), specify the folder path in your dataset and leave the file name blank, then check the box for “Delete file recursively”.
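Here is a minimal sketch of that configuration, again built as Python dicts and serialized to JSON. It defines a Binary dataset whose location sets a container and `folderPath` but no `fileName`, plus a Delete activity with `recursive` set to true. The dataset and location types, the linked service `StagingBlob`, the container `staging`, and the folder `sales/2019` are all assumptions for an Azure Blob Storage staging area. Verify them against the current ADF dataset and Delete activity references.

```python
import json


def staging_folder_dataset(name, linked_service, container, folder):
    """Binary dataset pointing at a folder; fileName is deliberately omitted."""
    return {
        "name": name,
        "properties": {
            "type": "Binary",
            "linkedServiceName": {
                "referenceName": linked_service,
                "type": "LinkedServiceReference",
            },
            "typeProperties": {
                "location": {
                    "type": "AzureBlobStorageLocation",
                    "container": container,
                    "folderPath": folder,
                    # No "fileName": the whole folder is the delete target.
                }
            },
        },
    }


def recursive_delete(dataset_name):
    """Delete activity that removes the folder's contents, subfolders included."""
    return {
        "name": "ClearStagingFolder",
        "type": "Delete",
        "typeProperties": {
            "dataset": {"referenceName": dataset_name, "type": "DatasetReference"},
            "storeSettings": {"type": "AzureBlobStorageReadSettings", "recursive": True},
        },
    }


if __name__ == "__main__":
    ds = staging_folder_dataset("StagingFolder", "StagingBlob", "staging", "sales/2019")
    print(json.dumps([ds, recursive_delete(ds["name"])], indent=2))
```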

You can use a wildcard (*) to specify files, but it cannot be used for folders.
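Before pointing a Delete activity at prod, it can help to preview which files a pattern would catch. The function below is a hypothetical local dry run, not part of Data Factory. It works as follows:

- It filters a list of blob-style paths against an exact folder path.
- It applies the wildcard only to the file-name segment, mirroring the rule that wildcards apply to files but not folders.
- It includes files in subfolders only when `recursive` is true.

Python's `fnmatch` stands in for ADF's matching, so edge cases may behave differently in Data Factory itself.

```python
from fnmatch import fnmatchcase
from posixpath import basename, dirname


def preview_delete(paths, folder, pattern="*", recursive=False):
    """Return the paths that a folder plus file-name wildcard would select.

    paths:     blob-style paths such as "staging/sales/a.csv"
    folder:    exact folder path (wildcards are rejected here)
    pattern:   file-name wildcard, e.g. "*.csv"
    recursive: also consider files in subfolders of `folder`
    """
    if any(ch in folder for ch in "*?["):
        raise ValueError("wildcards are only supported for file names")
    folder = folder.rstrip("/")
    selected = []
    for path in paths:
        parent = dirname(path)
        in_folder = parent == folder
        in_subfolder = parent.startswith(folder + "/")
        if (in_folder or (recursive and in_subfolder)) and fnmatchcase(basename(path), pattern):
            selected.append(path)
    return selected


if __name__ == "__main__":
    blobs = [
        "staging/sales/a.csv",
        "staging/sales/b.json",
        "staging/sales/2019/c.csv",
        "staging/other/d.csv",
    ]
    print(preview_delete(blobs, "staging/sales", "*.csv"))
    # ['staging/sales/a.csv']
    print(preview_delete(blobs, "staging/sales", "*.csv", recursive=True))
    # ['staging/sales/a.csv', 'staging/sales/2019/c.csv']
```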

Here’s to much more efficient development of data movement pipelines in Azure Data Factory V2.
